In pyspark, you might use a combination of Window functions and SQL functions to get what you want. The explode function (new in version 1.4.0) returns a new row for each element in the given array or map. It uses the default column name col for elements in the array, and key and value for elements in the map, unless specified otherwise.

One option in pyspark is to set the config while creating the SparkSession: from pyspark.sql import SparkSession, then spark = SparkSession.builder \ ...

In this guide, we talk about what the Python error means and why it is raised. We walk through two examples of this error in action so you learn how to solve it.

The plain-Python form of the mistake is calling a list with parentheses instead of indexing it with square brackets. With a list bound to lst, evaluating lst(0) ends in a traceback whose last line is TypeError: 'list' object is not callable. For an explanation of the full problem and what can be done to fix it, see TypeError: 'list' object is not callable while trying to …

On a separate classpath problem, the answer of Madau is correct: what you need to do is add the mleap-spark jar to your Spark classpath.
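The two plain-Python cases can be reproduced in a few lines. This is only a minimal sketch: the sample values and the helper names call_list_by_mistake and shadow_builtin_by_mistake are made up for illustration and do not come from the original question.

```python
def call_list_by_mistake():
    """Return the error message produced by calling a list like a function."""
    lst = [10, 20, 30]  # hypothetical sample values
    try:
        lst(0)  # parentheses mean "call", which a list does not support
    except TypeError as exc:
        return str(exc)


def shadow_builtin_by_mistake():
    """Return the error message produced after shadowing the built-in str."""
    str = "hello"  # this local name now hides the built-in str type
    try:
        str(42)  # this calls the string "hello", not the built-in
    except TypeError as exc:
        return exc.args[0]


list_error = call_list_by_mistake()
str_error = shadow_builtin_by_mistake()
first_item = [10, 20, 30][0]  # square brackets index the list instead

print(list_error)
print(str_error)
print(first_item)
```

In both cases the fix is to stop putting parentheses after something that is not a function: index the list with square brackets, and pick a variable name that does not collide with a built-in.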

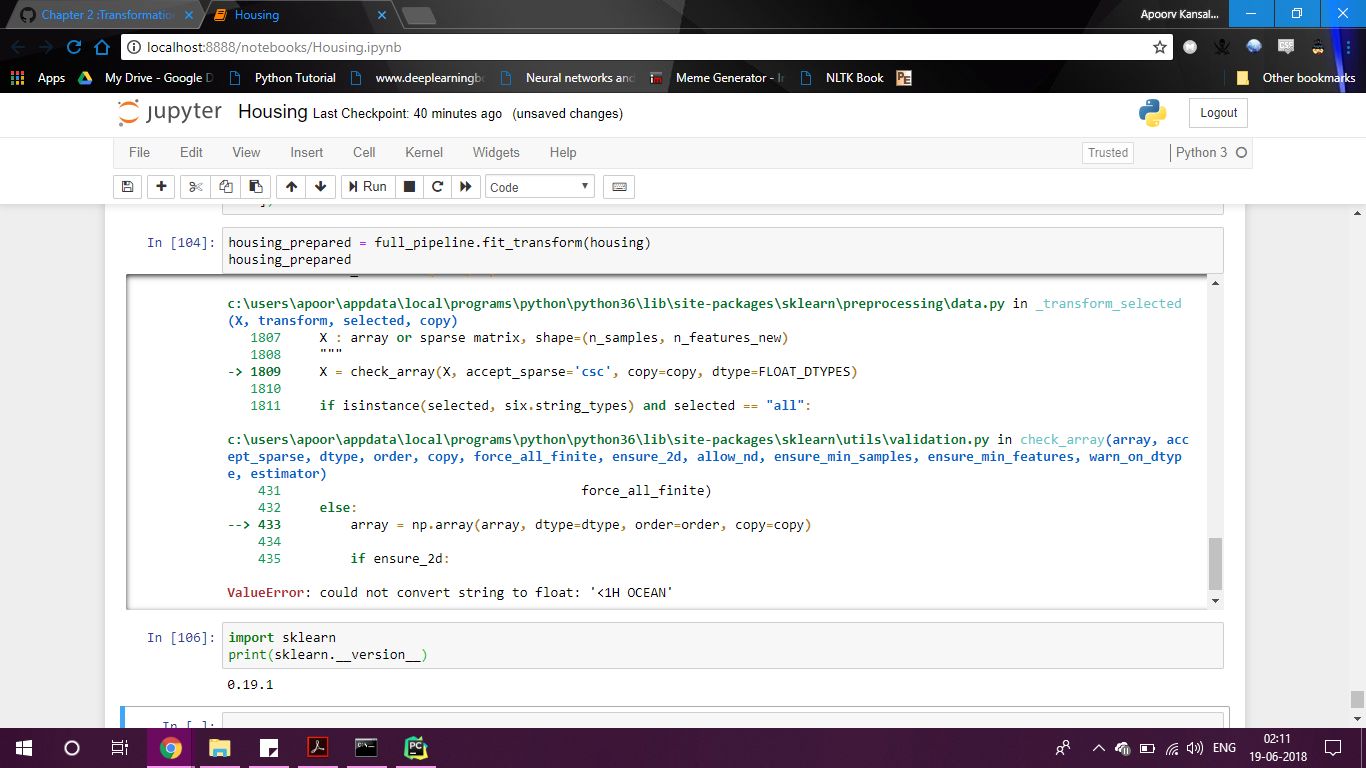

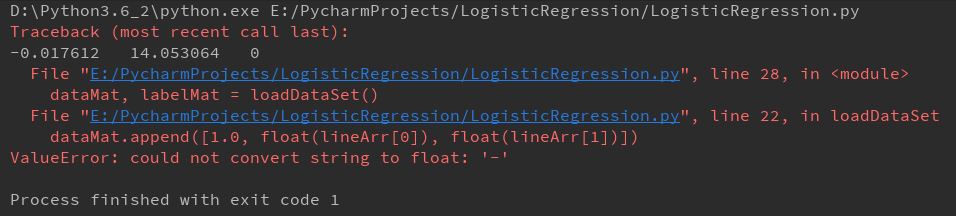

The question reads: it throws me error TypeError: 'Column' object is not callable at the 2nd line. Here we are getting this error because Identifier is a pyspark column, but we are treating it as a function. NoneType, list, tuple, int and str objects are not callable; only functions, methods, classes, and objects that define __call__ are.

The same applies to names. If you assign a value to a variable called str and then try to use the Python built-in with the same name in your program, "TypeError: 'str' object is not callable" is raised.

Solution 1: Spark should know that the function you are using is not an ordinary function but a UDF. There are 2 ways by which we can use a UDF on dataframes. Method 1 is with annotation, that is, decorating the function with udf from pyspark.sql.functions.

A pandas DataFrame and a pyspark DataFrame are different things. A pyspark DataFrame doesn't necessarily have the same set of methods. You can convert a pyspark DataFrame to a pandas DataFrame using the toPandas method: pd_datadf = datadf.toPandas(). pd_datadf then has all the methods you'd expect from a pandas DataFrame.

A related question: I understand why "write()" doesn't work, but if a DataFrameWriter object is returned by all of these methods, then why does "write" work? The reason is that write is a property of the DataFrame, not a method. Accessing saveDF.write already returns a DataFrameWriter, and adding parentheses tries to call that object. option, by contrast, is a method that returns the DataFrameWriter, so it can be chained. Instead of this: saveDF.write().option("header", "true").csv("pre-processed"), write this: saveDF.write.option("header", "true").csv("pre-processed").
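The difference between write and option can be imitated in plain Python without Spark. The classes FakeFrame and FakeWriter below are hypothetical stand-ins written for this sketch; they are not the pyspark classes and only mimic the property-versus-method distinction.

```python
class FakeWriter:
    """Hypothetical stand-in for a writer object; not the pyspark class."""

    def __init__(self):
        self.options = {}

    def option(self, key, value):
        # A regular method: it is called with parentheses and returns the
        # writer itself so that further calls can be chained.
        self.options[key] = value
        return self


class FakeFrame:
    """Hypothetical stand-in for a DataFrame-like object."""

    @property
    def write(self):
        # A property: plain attribute access already returns a writer.
        return FakeWriter()


frame = FakeFrame()

# Attribute access followed by a method call works.
writer = frame.write.option("header", "true")
print(writer.options)

# Parentheses after the property try to call the returned writer object,
# which is not callable.
try:
    frame.write()
    error_message = None
except TypeError as exc:
    error_message = str(exc)
print(error_message)

# callable() reports whether an object can be called at all.
print(callable(frame.write), callable(frame.write.option))
```

When an "object is not callable" error names a class you did not expect, checking callable() on the attribute in question is a quick way to see whether it is a property value or a method.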

sum is an aggregate function and, when called without any groups, it applies to the whole DataFrame.

> result = spark_sum(filter_df * filter_df) / spark_sum(filter_df)

This is a transformation which returns a Column and is only evaluated once it is applied to a DataFrame (lazy evaluation).

To refer to a column, build a column object and pass it to DataFrame operations rather than calling it. Column is not meant to be constructed directly from a name, so use col instead: from pyspark.sql.functions import col, then col_obj = col('column_name') to create the column object, then df.select(col_obj) to use it in DataFrame operations.

Conclusion: a pandas DataFrame and a pyspark DataFrame are different things, and a column is an object to pass around, not a function to call. This approach can help avoid the TypeError: 'str' object is not callable error.
